The bottleneck isn't building.

It's knowing what works.

The experiment orchestration layer for AI teams. Remyx learns your codebase, recommends what to try next, and turns every result into context for the next decision.

AI development is an empirical discipline

Remyx operationalizes the scientific method for AI engineering, managing the full cycle so every stage is instrumented and every decision is preserved.

Idea to Production, Systematically

Experiment with confidence.

Integrate discovery, building, and validation.

Hypothesize

Get recommendations grounded in your codebase, experiment history, and recent advances.

Implement & validate

Coding agents and your tools do the work. Remyx ties every metric, commit, and ticket back to the hypothesis it tested.

Decide & iterate

Capture the decision and why you made it. Each outcome, positive or negative, narrows the search.

Works with your stack

Remyx connects the tools your team already uses into a single experiment record. More integrations ship every month.

LaunchDarkly

and more

Learn what works.

Remyx helps engineers test more ideas and helps leads know which ones drive improvements.

For AI Engineers

Remyx understands your codebase from day one. Spend your time running experiments, not piecing together what the team already knows.

Hypotheses recommended from evidence

One integrated flow

Prior experiment context surfaces automatically

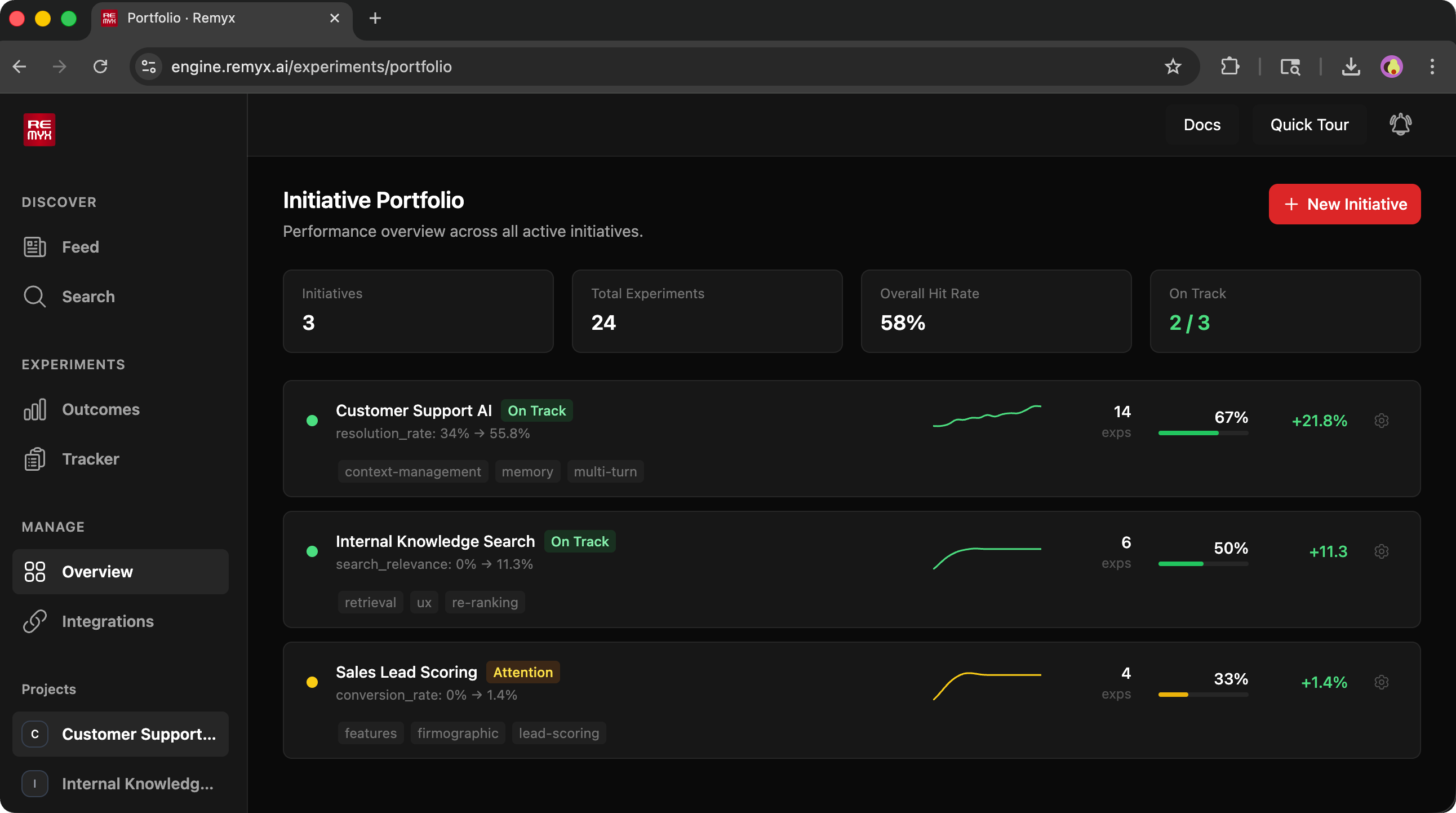

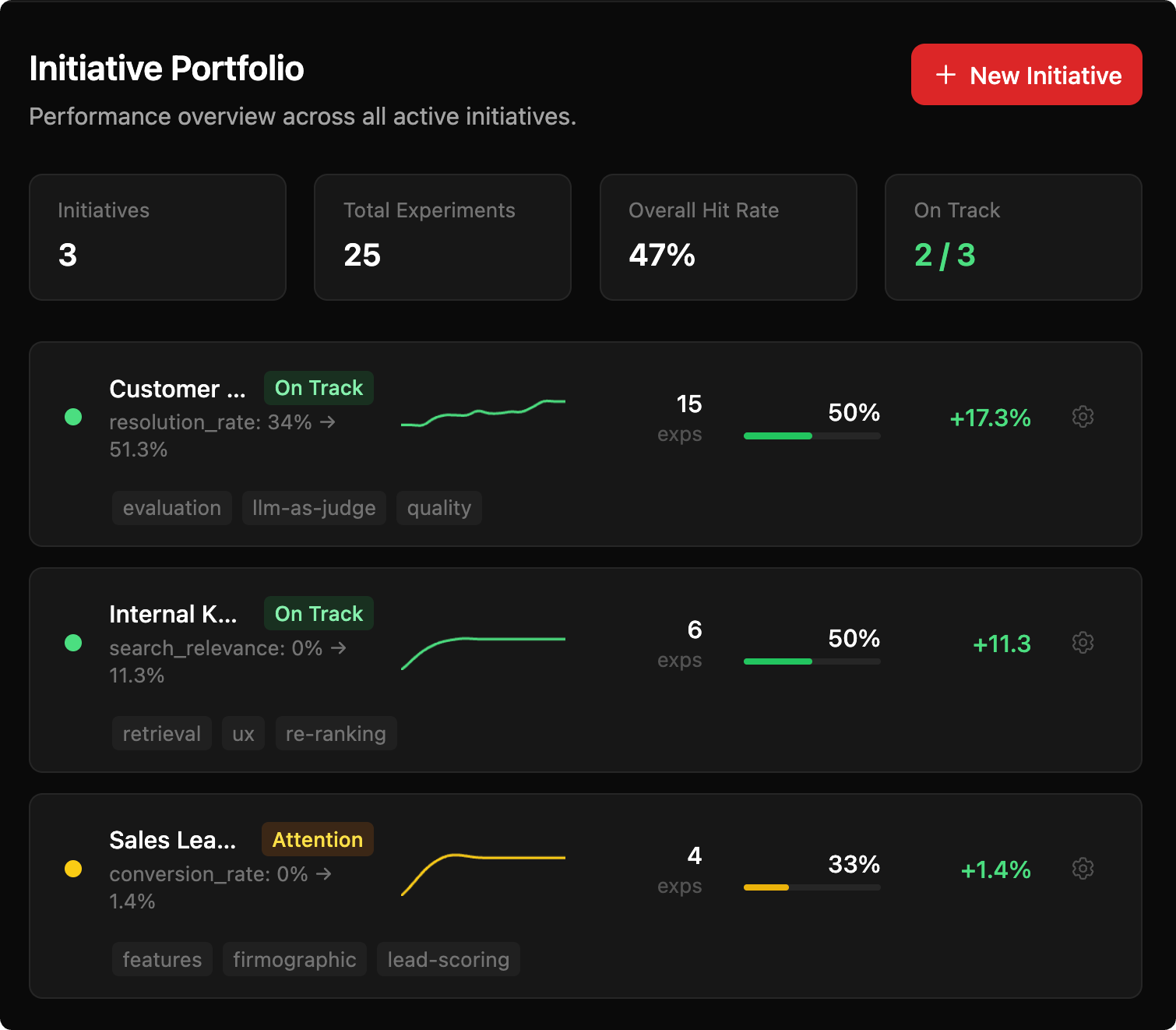

For Team Leads

A portfolio view across every active experiment. Every decision, trajectory, and pending call is visible without interrupting your team's flow.

A single portfolio view

Structured disposition on every call

A single source of truth

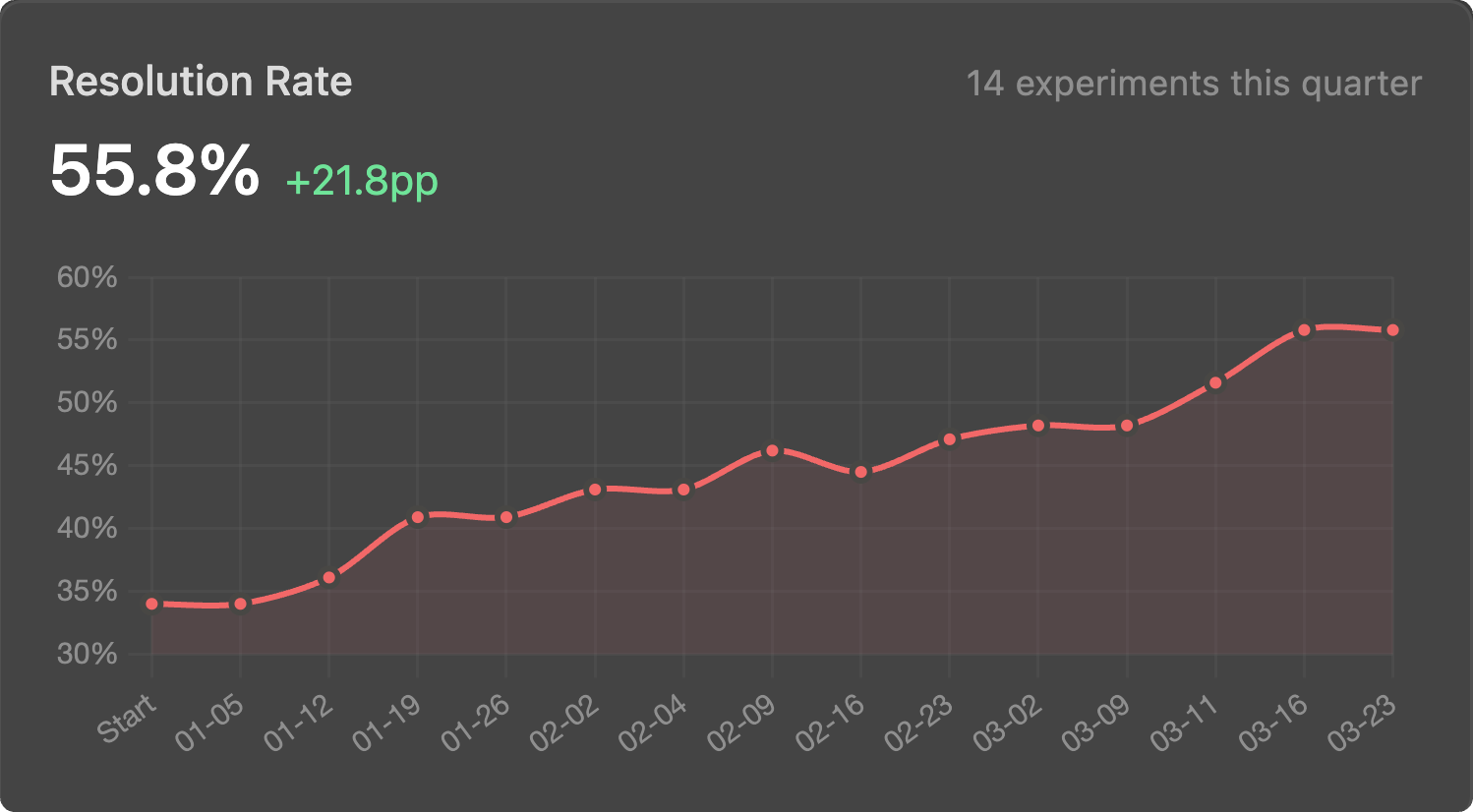

Each experiment adds evidence to refine your next hypothesis

The space of possible improvements is too large to try everything. Remyx helps your team start from relevant prior work and build on what you learn.

Every experiment is a decision: a hypothesis, a result, a rationale.

Experiment orchestration keeps that record compounding instead of evaporating, so your codebase evolves on evidence.

Built by practitioners, for teams building at the frontier

A team of mathematicians and award-winning ML innovators with a decade of experience applying AI in robotics, healthcare, content recommendation, and enterprise data and ML infrastructure.

Applied Mathematics, UC Berkeley. Former Solutions Architect at Databricks, advising on MLOps strategy for companies from startups to the Fortune 500. Award-winning ML innovator recognized by NVIDIA's developer community.

UC Berkeley. 10+ years applying ML in healthcare, robotics, and content recommendation at Riot Games, Tubi, and Robust.AI. Open-source tools cited by Google DeepMind and used in peer-reviewed research.

Talks, Pods & Writing

Conference talks, podcast conversations, and field notes on how AI teams go from experiment to production.

Active in the Open-Source AI Community

We contribute open-source tools, datasets, and benchmarks across AI domains, and the research community builds on them.